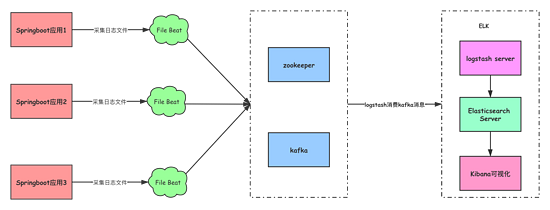

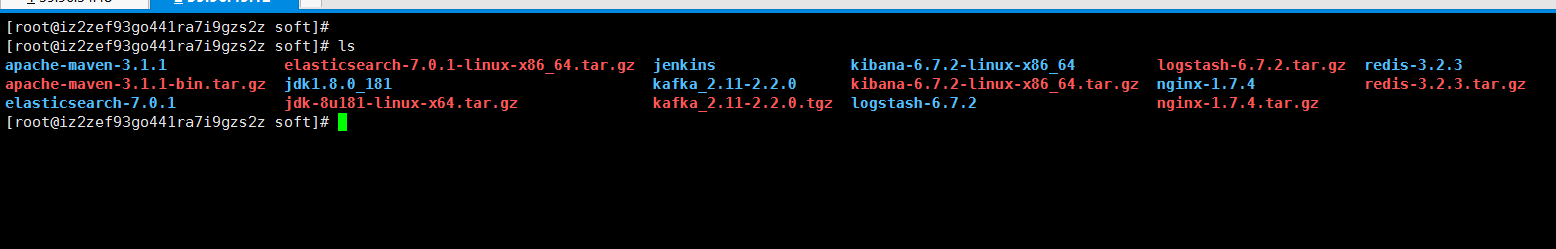

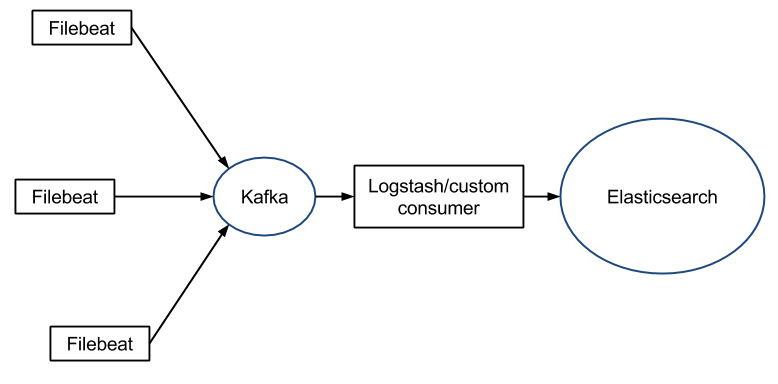

Kafka/Zookeeper docker-compose

The following is the content of docker-compose.yml, which is responsible for running Zookeeper and Kafka. Please clone it and follow the steps below. Use kubectl exec to get into the pod and test whether the modules are enabled. Delete the readiness probe from the DaemonSet, then the pods and containers run. All the source code related to this post is available in the beats GitLab repo. Just install 6.8.11-SNAPSHOT with the Kafka output configuration. In this post I'm going to show how I have integrated Filebeat with Kafka to take the logs from different services. The latest version of Filebeat now supports sending log file data directly to Kafka.

Then Kibana displays them on the dashboard. Logstash filters the logs and publishes them to Elasticsearch. Filebeat listens for new contents in the log files and publishes them to Logstash. Using the Scalyr Connector for Custom Applications: there are times when an application writes logs directly to a Kafka topic. The Scalyr Kafka Connector automatically sends the message, logfile, and host name fields from the Filebeat message.

Normally Filebeat integrates with Logstash in the ELK stack. The Kafka Scalyr Connector supports Filebeat out of the box with minimal configuration. Filebeat guarantees that the contents of the log files will be delivered to the configured output at least once and with no data loss. It achieves this behavior because it stores the delivery state of each event in the registry file.

What do you have in those files? Right now you have Filebeat collecting logs on your servers and sending the data to Kafka, and Logstash consumes the data from those Kafka topics. Logstash processes the logs and applies some filters. All those filters will be lost when you start sending the data directly to Elasticsearch, and depending on what you do with this data, this can break some things on your side.

Before migrating you need to check whether the filters that Logstash applies to your messages can be replicated in Elasticsearch using an ingest pipeline. Not everything that Logstash can do is possible with an ingest pipeline.

We can configure Filebeat to extract log file contents from local/remote servers.

There are some parser files on the Kafka server, would it be in those? I have talked to support and have had them help me with several processes, but there is a limit to what they can do because of the version and the need for professional services, which our management simply won't do.

This is one of the files, the others are similar with their own configs but nothing sticking out that points to anything other than the zookeeper1/2/3

#- nf
bootstrap_servers => "$"]

Logstash servers have this in the /etc/logstash/ directory, and other config files for startup, java and log4j

Logstash ttings /etc/logstash/ -r -f /opt/logstash-parsers/parsers/ti-logstash/ -l /opt/share/logs/ti-logstash/ -w 1

Etc, etc for each server type we are running filebeats on. This is what is being used to start Logstash

Logstash ttings /etc/logstash/ -r -f /opt/logstash-parsers/parsers/dl-logstash/ -l /opt/share/logs/dl-logstash/ -w 1
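The at-least-once guarantee mentioned above, where delivery state is kept in a registry file, can be illustrated with a small sketch. This is not Filebeat's actual implementation or registry format. The function name `ship_new_lines`, the JSON registry layout, and the `send` callback are all assumptions made for illustration. The idea shown is only that the stored offset advances after a successful send, so a failed or interrupted send is retried on the next run (which may produce duplicates, but not gaps).

```python
import json
import os


def ship_new_lines(log_path, registry_path, send):
    """Send unread complete lines from log_path, recording progress in registry_path.

    Hypothetical sketch: the registry is a JSON file mapping a log path to the
    byte offset of the last line that was successfully sent.
    """
    # Load the last acknowledged offset (0 if nothing has been recorded yet).
    offset = 0
    if os.path.exists(registry_path):
        with open(registry_path) as f:
            offset = json.load(f).get(log_path, 0)

    with open(log_path, "rb") as log:
        log.seek(offset)
        while True:
            line = log.readline()
            if not line.endswith(b"\n"):
                break  # end of file or a partially written line; retry later
            # send() may raise; in that case the offset below is never saved,
            # so the same line is sent again on the next call.
            send(line.decode("utf-8").rstrip("\n"))
            offset = log.tell()
            # Persist progress only after the output accepted the event.
            with open(registry_path, "w") as f:
                json.dump({log_path: offset}, f)
```

If the process stops between a successful `send` and the registry write, the line is sent twice on restart. That is the trade-off behind "at least once": duplicates are possible, losses are not.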

I don't see any other configuration files or settings anywhere on these 3 Kafka servers. Kafka doesn't appear to have anything really going on with it. The servers have filebeats installed with a pretty simple config, this is an example of one of them.

Elasticsearch generates indices named "logstash-TOPIC-YEAR.NUM" and all of the servers get their logs pushed into the single index of their topic (gk, iis, etc).

Server -> kafka -> logstash -> elasticsearch

Each server has filebeats running, Kafka has 3 servers only running Kafka, Logstash has 3 servers only running Logstash, and Elasticsearch has 6 servers: 3 running Elasticsearch and Kibana (elastic clients) and the remaining 3 are Elasticsearch only (data nodes).

With our current full stack setup the logs flow like this, which I think is normal. However, Elastic Cloud apparently drops Kafka and Logstash from the stack and I have to change filebeats to point directly to Elasticsearch. We are closing all of our on-prem sites down.

This section shows how to set up Filebeat modules to work with Logstash when you are using Kafka in between Filebeat and Logstash in your publishing pipeline.

I am in the process of moving from an on-prem full ELK stack to Elastic hosted services. First, I am new to all of this and have next to no knowledge of how all of our systems were set up originally, as I took this over when the person in charge of it left the company.

ELK 6.8.12, Filebeats 6.4 (yes, old, it will be upgraded after the move)
